A marketing team can waste months improving the wrong thing because no one checked how real prospects respond.

That problem shows up everywhere. A business owner picks the headline that sounds sharper. A sales manager rewrites a chatbot greeting because it reads better in a meeting. Someone shortens an Instagram DM flow because it feels faster. The campaign goes live, results are mixed, and no one can clearly say what helped or hurt.

The true cost is uncertainty.

When your marketing runs on opinion, every change is harder to trust. A good week might come from the offer, the timing, the audience, or pure luck. That makes growth hard to repeat, and repeatable growth is what turns marketing into a reliable source of leads and sales.

A/B testing gives you a more disciplined way to improve. You create two versions of one element, show them to comparable audiences, and measure which version gets more of the action you want, whether that is a click, a reply, a booked call, or a purchase. It works like a side-by-side product trial. Instead of asking your team which option they prefer, you check which option gets prospects to move.

That matters even more in conversational marketing, where small wording choices change how people feel in the moment. A website visitor may ignore a generic pop-up and still respond to a well-timed Messenger prompt. A prospect may abandon a chatbot after the first question if the opener feels robotic, but continue if the message sounds helpful and clear. In a DM flow, the order of questions can either build momentum or create friction.

Those details affect revenue. More replies can mean more qualified leads. Better qualification can mean more booked calls. Smoother conversations can mean more sales without spending more to get attention in the first place.

A/B testing helps you improve those handoffs with evidence instead of guesswork.

Stop Guessing, Start Growing

Marketing gets expensive fast when every choice is based on instinct.

You launch a landing page. Traffic arrives. Some people click, most don’t. You tweak the headline, then the button, then the form, but because you changed several things at once, you still don’t know what made the difference. That’s how teams burn time and budget without building any real learning.

A/B testing gives you a cleaner way to improve performance. You compare one version against another, hold the rest steady, and let the results show which option earns more clicks, leads, or sales. Instead of asking, “Which version do we like?” you ask, “Which version gets customers to act?”

That sounds simple, but it changes how a business grows.

Why this matters to revenue

If more people click your ad, more visitors reach your page. If more visitors start your chatbot, more prospects get qualified. If more qualified prospects reach checkout or book a call, revenue improves. A/B testing helps at each of those handoffs.

Here’s where people get stuck. They think testing is only for websites. It isn’t.

You can test an email subject line, a product page headline, a pop-up offer, a Messenger welcome message, an Instagram DM prompt, or the order of questions in a lead qualification flow. Anywhere customers make a decision, testing can help.

A/B testing is the discipline of replacing “I think this will work” with “customers showed us what works.”

That’s why strong marketers treat it as a growth habit, not a one-off tactic. They don’t chase perfection before launch. They launch a controlled test, learn, improve, and repeat.

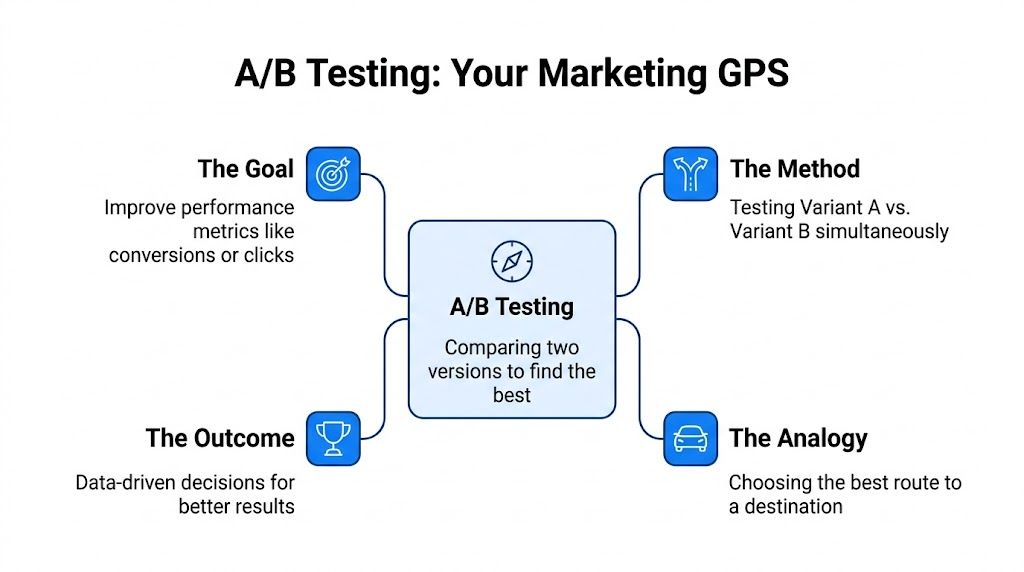

What Is A/B Testing in Simple Terms

Think of A/B testing like using a GPS when you have two possible routes to the same destination. Route A is the way you usually drive. Route B is an alternative. You don’t debate which one seems faster. You compare them and see which one gets you there better.

Marketing works the same way.

A/B testing, also called split testing, is when you show two versions of a marketing asset to different groups of people and measure which one performs better. The goal is to change just one important variable so you can tell what caused the result.


The four parts that make A/B testing work

Every basic test has the same building blocks:

- Control. Version A is your current version, or the one you want to benchmark.

- Variant. Version B includes one deliberate change.

- Audience split. You divide traffic so that different people see different versions.

- Success metric. You decide what “better” means before the test starts.

If you’re testing a landing page, the metric might be form submissions. If you’re testing an email, it could be opens or clicks. If you’re testing a chatbot, it might be replies, button taps, lead completions, or purchases started.
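One common way to split an audience is to hash each visitor's ID, so the same person always sees the same version. The sketch below assumes you have some stable identifier per visitor; `assign_variant`, the experiment name, and the user IDs are hypothetical names for illustration, not part of any specific tool.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, split: float = 0.5) -> str:
    """Return "A" or "B" for a visitor, stable across repeat visits."""
    # Hash the experiment name together with the user ID so the same person
    # can land in different groups for different experiments.
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    # Turn the first 8 hex digits into a number in the range [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    return "A" if bucket < split else "B"


if __name__ == "__main__":
    counts = {"A": 0, "B": 0}
    for i in range(10_000):
        counts[assign_variant(f"user-{i}", "cta-wording")] += 1
    print(counts)  # the two groups should be roughly equal in size
```

Hashing instead of flipping a coin on every visit keeps the experience consistent for returning visitors, which helps keep the comparison fair.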

What “single variable” actually means

This is the part many marketers misunderstand.

If you change the headline, image, and CTA all at once, and conversions go up, you won’t know which change produced the lift. A clean A/B test isolates one variable, so the result is easier to trust.

A straightforward example is a CTA test:

- Version A says “Get Started”

- Version B says “Book Your Free Demo”

Everything else stays the same.

In digital marketing, A/B testing is a controlled statistical experiment that isolates the causal impact of a single variable change on performance metrics. Directive Consulting notes that a test like CTA button color can reveal cause and effect, and the better variant can produce a 20% to 30% increase in click-through rates in some cases, as explained in its guide to what A/B testing means in digital marketing.

What this looks like in conversational marketing

A conversational test follows the same logic.

You might compare:

- Version A. “Hi, want help finding the right product?”

- Version B. “Hi 👋 Want me to help you pick the best product in under a minute?”

Same audience type. Same offer. Same destination. One changed message.

If Version B gets more replies or more product quiz starts, you’ve learned something useful about how your audience wants to be approached.

The core principle is simple. Let customer behavior decide, not your opinion.

If you want another plain-English explanation of what is A/B testing, that resource is a helpful companion to the idea above.

Why A/B Testing Is a Core Marketing Skill

A small change can have an outsized effect on revenue. One different headline, one clearer chatbot prompt, or one better-timed follow-up can be the difference between a visitor who disappears and a lead who books a call.


That is why A/B testing matters so much as a practical marketing skill. Every campaign includes decisions that shape results. Your headline sets expectations. Your offer frames value. Your CTA asks for action. Your follow-up sequence keeps momentum alive or lets it die. If those choices are based only on instinct, you are running your growth engine with guesswork in the driver’s seat.

The market has already shifted in this direction. A large share of businesses now run A/B tests on their websites, and major platforms conduct experiments constantly to improve performance. The lesson for a business owner is simple. Testing is no longer a specialist activity for giant companies with research teams. It is a normal way to make better marketing decisions with less risk.

What business owners actually gain from testing

The benefit is not “better marketing” in the abstract. It is more leads, more sales, and fewer wasted clicks.

- Higher conversion rates from the traffic you already have. If a landing page, email, or chatbot flow stops people at a certain step, testing helps you find the friction and remove it.

- Clearer insight into buyer motivation. You learn whether your audience responds better to speed, savings, social proof, reassurance, or convenience. That insight can improve your ads, emails, sales scripts, and onboarding.

- Safer decision-making. Instead of rolling out a major change to everyone, you can test it on a smaller segment first and avoid expensive mistakes.

- Stronger return on ad spend. If more visitors reply, book, or buy without increasing the budget, your marketing becomes more efficient.

- Better team alignment. Opinions matter less when customer behavior gives you the answer.

Good testing also depends on good tracking. If you cannot see where visitors came from, what page they viewed, or where they dropped off, it becomes much harder to test the right thing. A simple system for tracking website visitors and their behavior gives your tests a much stronger starting point.


Why this matters even more in chat and messaging

Conversational marketing raises the stakes because the interaction feels personal. On a web page, a visitor can scroll past weak copy and keep exploring. In a Messenger, Instagram, or website chat flow, the message arrives like a live conversation. If the opening line feels generic, the button text feels unclear, or the follow-up comes too soon, the conversation can stall immediately.

A chatbot sequence works a lot like a sales rep’s first minute on a call. The wording has to build trust fast, clarify the next step, and keep the person engaged without sounding robotic or pushy. That makes A/B testing especially useful in conversational marketing.

You might test:

- an opener framed as help versus one framed as speed

- a quiz invitation versus a direct product recommendation

- one CTA button label versus another

- a follow-up sent after 5 minutes versus 30 minutes

- a short qualification flow versus a more guided one

The point is not to perfect copy for its own sake. The point is to improve the moments that move someone from casual interest to real intent.

Business takeaway: A/B testing helps you replace “I think this will work” with “We know this version gets more replies, more qualified leads, and more sales.”

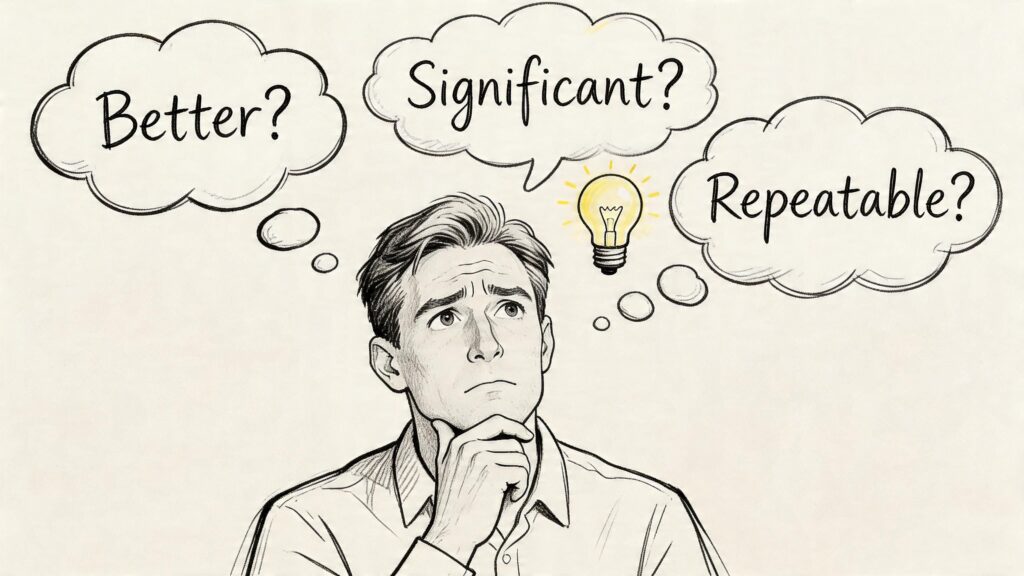

Understanding the Statistical Basics of A/B Testing

Statistics make a lot of marketers uneasy. They hear terms like significance, confidence, and power, then assume they need a data scientist to run a basic test well.

You don’t.

You do need to understand three practical questions. If you can answer them, you can avoid most bad testing decisions.


Is the difference real or just random

Early in a test, results bounce around. One version looks ahead in the morning. The other catches up by evening. That doesn’t mean the audience is changing its mind. It usually means you don’t have enough data yet.

Statistical significance helps answer whether the observed difference is likely real rather than random noise. In plain language, it asks: if Version B looks better, is that because customers prefer it, or because the sample is still too small and unstable?

A lot of teams stop a test as soon as one version looks better. That’s a mistake.

If you pick a winner too early, you’re often rewarding randomness, not performance.
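To make the "real or random" question concrete, here is a minimal sketch of a two-proportion z-test, one standard way to check significance for conversion rates. Most testing tools run a check like this for you; the function name and the visitor counts are illustrative assumptions, not data from a real campaign.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, p_value) for the difference between two conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate: the conversion rate if both versions performed the same.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0  # no conversions (or all conversions): nothing to compare
    z = (p_b - p_a) / se
    # Two-sided p-value: chance of a gap at least this large from noise alone.
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


if __name__ == "__main__":
    # Same conversion rates (5.0% vs 6.5%), very different sample sizes.
    print(two_proportion_z_test(50, 1_000, 65, 1_000))
    print(two_proportion_z_test(500, 10_000, 650, 10_000))
```

With the smaller sample, the p-value stays above the usual 0.05 cutoff, so the gap could easily be noise. With ten times the traffic and the same rates, it falls well below that cutoff.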

How confident are we in the result?

You will often see a 95% confidence threshold mentioned. You don’t need to memorize formulas to use that concept well. In practice, it means you only declare a winner when a gap that large would show up less than 5% of the time if the two versions actually performed the same.

That matters because business decisions follow the result. You may change an ad creative, rewrite a lead form, or send more budget to a certain funnel step. The more confidence you need before acting, the less likely you are to roll out a false winner.
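A confidence interval is another way to express that confidence: instead of a yes or no answer, it gives a plausible range for the true difference between versions. The sketch below uses the standard normal approximation; the function name and the sample numbers are assumptions for illustration.

```python
from math import sqrt
from statistics import NormalDist


def lift_confidence_interval(conv_a: int, n_a: int, conv_b: int, n_b: int,
                             confidence: float = 0.95):
    """Return (low, high) bounds for the difference in conversion rate, B minus A."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Standard error of the difference between two independent proportions.
    se = sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    # Critical value for the chosen confidence level (about 1.96 for 95%).
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    diff = p_b - p_a
    return diff - z * se, diff + z * se


if __name__ == "__main__":
    low, high = lift_confidence_interval(500, 10_000, 650, 10_000)
    print(f"B minus A is plausibly between {low:.2%} and {high:.2%}")
```

If the whole range sits above zero, Version B is likely a real improvement. If the range includes zero, the test has not yet separated the two versions.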

For website teams, clean tracking is part of this. If your analytics are messy, even a well-designed test can mislead you. That’s why having reliable visitor tracking matters before you interpret test outcomes. Clepher’s guide on tracking website visitors is a useful reference for getting that foundation right.

Did we test with enough people?

This is where many smaller brands struggle.

For a baseline 5% conversion rate, achieving 80% statistical power often requires about 16,000 visitors per variant to detect a small but meaningful 0.5% lift, according to Oracle’s explanation of A/B testing and sample size. The lesson isn’t that every business needs that exact volume. The lesson is that small effects need larger samples.

If your traffic is low, you have two smart options:

- Test bigger changes so the difference is easier to detect

- Run the test longer so you gather enough data

Low-traffic brands often test tiny tweaks, get murky results, and conclude testing “doesn’t work.” Usually the test was just underpowered.
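To see why small effects need larger samples, here is a rough sample size sketch using a common textbook approximation for comparing two conversion rates. It assumes a two-sided test at 5% significance and treats the lift as an absolute change in percentage points. Those choices are assumptions on my part, and different choices (one-sided tests, relative lifts, other formulas) produce different numbers, so the output will not necessarily match any published figure.

```python
from math import ceil
from statistics import NormalDist


def visitors_per_variant(baseline: float, absolute_lift: float,
                         alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate visitors needed per version to detect the given lift."""
    p1 = baseline
    p2 = baseline + absolute_lift
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_beta = NormalDist().inv_cdf(power)           # desired statistical power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / absolute_lift ** 2)


if __name__ == "__main__":
    # Hypothetical scenarios: a 5% baseline with a small and a large lift.
    print(visitors_per_variant(0.05, 0.005))  # 5.0% -> 5.5%
    print(visitors_per_variant(0.05, 0.02))   # 5.0% -> 7.0%
```

Under these assumptions, the larger lift needs a small fraction of the traffic the smaller one does, which is the practical case for testing bigger changes when traffic is limited.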

A simple way to think about good test hygiene

Use this mental checklist before you trust a result:

| Question | What you’re checking | Why it matters |
|---|---|---|
| Is one version clearly ahead? | Directional performance | Tells you whether there may be a meaningful difference |
| Has the test run long enough? | Stability over time | Reduces the chance of reacting to short-term swings |
| Did enough people see each version? | Sample size | Helps prevent inconclusive or misleading outcomes |

The practical rule for non-technical teams

You don’t need to calculate everything by hand. Most testing tools help with significance and sample thresholds. Your job is to respect the logic.

- Pick one main metric

- Change one important variable

- Let the test gather enough data

- Don’t call winners early

- Treat inconclusive results as information, not failure

An inconclusive test still teaches you something. It may tell you the change was too small, the audience was too mixed, or the metric wasn’t the right one.

Common A/B Testing Examples Across Your Marketing Funnel

The fastest way to understand A/B testing is to see where it fits in real campaigns. Most businesses have more testing opportunities than they realize.

A/B testing works anywhere a prospect has to make a decision. Click the ad or ignore it. Open the email or skip it. Start the chat or bounce. Finish the form or abandon it.

Website and landing page tests

These are the classic examples because the actions are easy to track.

You can test:

- Headlines that focus on speed versus savings

- CTA buttons that use different wording

- Page layouts that move testimonials higher or lower

- Forms with fewer versus more fields

- Product page images that show the product alone versus in use

If a page gets traffic but doesn’t convert, start with the element closest to the decision point. Usually that’s the headline, offer framing, form friction, or CTA.

Email and paid ad tests

Email is ideal for testing message framing. Ads are ideal for testing attention and click intent.

For email, common tests include:

- Subject lines

- Sender names

- Preview text

- CTA wording inside the email

- Plain text style versus designed layout

For paid campaigns, marketers often test:

- Primary ad copy

- Creative style

- Offer angle

- Call to action

- Audience-message fit

If you run paid acquisition and want a clearer view of how campaign variables affect traffic quality, reviewing how agencies structure and optimize PPC services can give useful context for what to test inside your ad funnel.

Conversational marketing tests that most brands overlook

This is where it gets interesting.

A chatbot or DM flow isn’t just a support tool. It’s a conversion path. People decide whether to reply, tap, share details, book, or buy based on the way the conversation unfolds.

Useful tests include:

- Opening message with a direct offer versus a friendly question

- Emoji use versus plain text

- Buttons versus open-ended reply prompts

- Product quiz path versus category menu

- Follow-up timing after someone drops off

- Urgency wording versus benefit wording

- Personalized greeting versus generic greeting

A strong result on one channel doesn’t automatically transfer to another. Adobe’s overview notes that a conversational prompt that gets 35% click-through on Instagram may perform very differently on WhatsApp because user expectations differ by platform, which is why channel-specific A/B testing matters.

A message that feels casual and engaging in Instagram DMs can feel intrusive in WhatsApp. Test by channel, not by assumption.
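If your results are pooled across channels, a winner on one platform can hide a loser on another. A minimal sketch of breaking results out by channel, assuming you can export one record per person with the channel, the version shown, and whether they converted (the record shape and function name are hypothetical):

```python
from collections import defaultdict


def rates_by_channel(events):
    """events: iterable of (channel, variant, converted) tuples.

    Returns a dict mapping (channel, variant) to its conversion rate.
    """
    totals = defaultdict(lambda: [0, 0])  # [conversions, people who saw it]
    for channel, variant, converted in events:
        cell = totals[(channel, variant)]
        cell[0] += int(converted)
        cell[1] += 1
    return {key: conv / seen for key, (conv, seen) in totals.items()}


if __name__ == "__main__":
    # Made-up records purely to show the shape of the input and output.
    sample = [
        ("instagram", "A", True), ("instagram", "A", False),
        ("instagram", "B", True), ("instagram", "B", True),
        ("whatsapp", "A", True), ("whatsapp", "A", False),
        ("whatsapp", "B", False), ("whatsapp", "B", False),
    ]
    for key, rate in sorted(rates_by_channel(sample).items()):
        print(key, f"{rate:.0%}")
```

Each channel still needs enough traffic on its own before you trust its result.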

A/B test ideas by marketing channel

| Channel | Element to Test | Version A (Control) | Version B (Variant) |
|---|---|---|---|
| Website homepage | Hero headline | Benefit-led headline | Problem-led headline |
| Landing page | CTA button | “Get Started” | “Claim My Offer” |
| Product page | Social proof placement | Reviews lower on the page | Reviews near CTA |
| Email | Subject line | Curiosity-based | Direct offer-based |
| Paid ads | Creative angle | Product-focused image | Lifestyle-focused image |
| Messenger bot | Welcome message | “Need help finding something?” | “Want me to recommend the right product?” |
| Instagram DM flow | First prompt | Button menu | Short question with reply options |
| WhatsApp campaign | Follow-up style | Reminder message | Value-led check-in |
| Lead capture flow | Qualification order | Ask budget first | Ask the goal first |

If you want to improve the quality of clicks before they ever hit your conversion point, Clepher’s guide on how to improve click-through rates pairs well with these testing ideas.

How to Plan and Run Your First A/B Test

Your first test doesn’t need to be complicated. It needs to be focused.

The easiest way to get good at A/B testing is to run one clean experiment, learn from it, and repeat the process. Don’t start with a full funnel redesign. Start with one decision point that clearly affects leads or sales.

A practical checklist

- Choose one business metric that matters. Pick a metric tied to revenue, not vanity. Good starting points include lead form completions, booked calls, product page clicks, add-to-cart actions, email clicks, or chatbot lead captures. If you test everything at once, you won’t know what success means.

- Find the friction point. Look for a place where people hesitate. Maybe lots of visitors reach your landing page, but few submit the form. Maybe many users open your DM flow, but stop after the first message. That’s where a test can provide an advantage.

- Write a clear hypothesis. Keep it simple: if we change this one element, we expect this metric to improve because of this customer behavior. Example: changing the first chatbot message from generic to benefit-led will increase replies because users will understand the value faster.

- Build one variant only. Start with A versus B. One control, one challenger. Make one meaningful change. Don’t rewrite the whole page. Don’t redesign the whole flow. Keep the experiment narrow enough that the result teaches you something specific.
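If it helps to keep plans consistent across a team, the checklist above can be captured as a small record that forces you to fill in every part before launch. This is only a sketch; `ExperimentPlan` and its field names are made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExperimentPlan:
    element: str    # the one thing being changed
    control: str    # Version A
    variant: str    # Version B
    metric: str     # the single success metric, chosen before launch
    rationale: str  # the customer behavior you expect to drive the change

    def hypothesis(self) -> str:
        """Write the plan out as a one-sentence hypothesis."""
        return (
            f"If we change the {self.element} from {self.control} to "
            f"{self.variant}, {self.metric} will increase because {self.rationale}."
        )


if __name__ == "__main__":
    plan = ExperimentPlan(
        element="first chatbot message",
        control="generic",
        variant="benefit-led",
        metric="replies",
        rationale="users will understand the value faster",
    )
    print(plan.hypothesis())
```

Writing the hypothesis down before launch also makes it harder to redefine success after the results come in.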

Running the test without ruining it

A common and costly mistake is calling a winner too early. Salesforce notes that declaring a winner after insufficient data leads to false positives and weak decisions, which is why teams need proper sample size planning and should avoid acting on statistical noise, as discussed in its overview of A/B testing pitfalls and scientific rigor.

That warning should shape how you run the test.

- Set the metric before launch so you don’t cherry-pick a winner later

- Split the audience fairly so each version gets a comparable chance

- Let the test run long enough to collect stable data

- Watch for quality, not just volume, because more clicks don’t help if lead quality drops
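One way to avoid peeking is to decide the stopping rule up front and check it mechanically. A minimal sketch follows; the 14-day minimum and 1,000 visitors per version are placeholder assumptions, and you should set your own thresholds from your sample size planning.

```python
from datetime import date


def ready_to_evaluate(start: date, today: date, seen_a: int, seen_b: int,
                      min_days: int = 14, min_per_variant: int = 1_000) -> bool:
    """Return True only when both the duration and sample thresholds are met."""
    ran_long_enough = (today - start).days >= min_days
    # The smaller group is the limiting factor, so check it rather than the total.
    enough_data = min(seen_a, seen_b) >= min_per_variant
    return ran_long_enough and enough_data


if __name__ == "__main__":
    start = date(2024, 3, 1)
    print(ready_to_evaluate(start, date(2024, 3, 5), 1_500, 1_480))   # too early
    print(ready_to_evaluate(start, date(2024, 3, 20), 1_500, 1_480))  # both thresholds met
```

Until this returns True, treat any lead one version has as provisional.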

What to do after the result

Once you have a trustworthy result, you have three possible outcomes:

- There’s a winner. Roll out the stronger version and document what you learned.

- There’s no clear winner. Keep the control or test a bigger change.

- The variant loses. That’s still useful. You just avoided scaling a weaker idea.

Practical rule: A losing test is still a productive test if it saves you from rolling out the wrong change.

If you want more ideas for what to optimize after your first experiment, Clepher’s article on conversion rate optimization techniques is a solid next read.

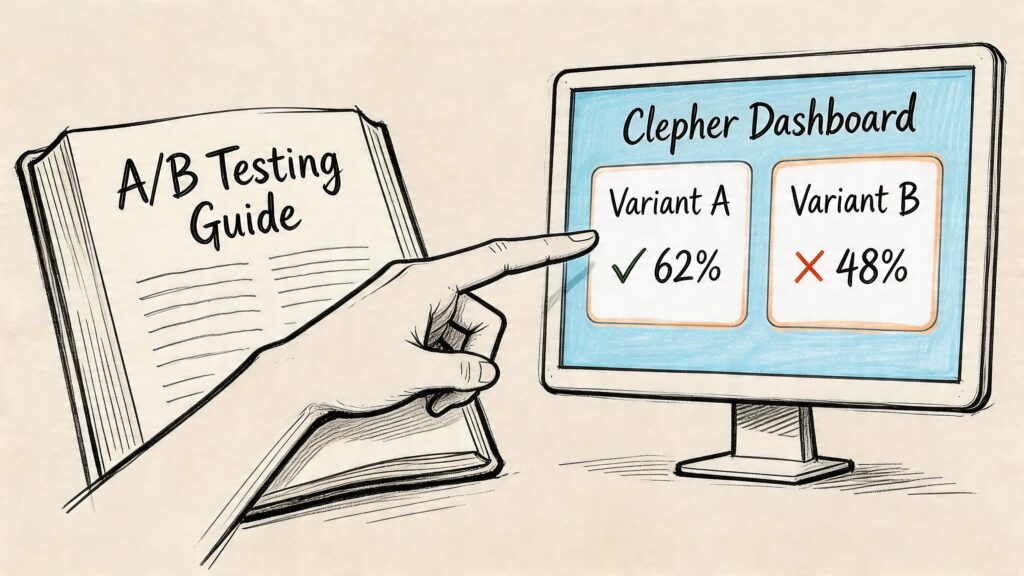

Supercharge Your Conversational Marketing with Clepher

Most articles about A/B testing stay stuck on web pages and email subject lines. That’s useful, but it misses one of the fastest places to improve conversions today: conversations.

When someone enters a Messenger flow, responds to an Instagram DM, or engages with an on-site bot, they’re already raising their hand. The job isn’t just to answer. It’s to guide that person toward the next valuable action.

That makes conversational marketing a strong fit for testing.


What to test inside a chatbot flow

A conversation has more moving parts than a static page, which gives you plenty of useful variables to test.

Examples include:

- Welcome message wording

- Question order

- Menu buttons versus free-text prompts

- Discount-first versus quiz-first lead capture

- Follow-up sequence timing

- Personalization tags versus generic copy

- Support tone versus sales tone

A product recommendation bot, for example, might test whether people respond better to a direct question like “What are you shopping for today?” or a guided menu such as “Shop by goal,” “Shop by budget,” or “Best sellers.” Both paths can work. The better one depends on your audience and channel.

A practical setup for a first conversational test

Say you want more qualified leads from Instagram DMs.

A sensible first test might look like this:

| Test part | Version A | Version B |
|---|---|---|
| First message | Friendly greeting with a generic offer | Benefit-led message that promises a quick recommendation |
| User path | Open question | Button choices |
| Main metric | Lead completions | Lead completions |

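That setup keeps the objective clear. You’re not trying to optimize every message. You’re isolating the start of the conversation and measuring whether more people progress. Because the opener and the reply format change together here, read the result as a verdict on the opening step as a whole; a follow-up test can then separate the wording from the buttons.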

How a no-code platform helps

This is where a tool built for conversational flows helps. Clepher lets teams design chatbot flows with a drag-and-drop builder, test entire flows or individual messages with A/B split testing, use random path distribution for alternative branches, and track actions such as clicks, replies, tags, and segment behavior across website chat, Facebook Messenger, WhatsApp, and Instagram Direct Message.

That matters because conversational tests can get messy if you’re trying to manage branches manually. A structured builder makes it easier to keep the test clean and compare outcomes without changing several things by accident.

Metrics that matter in conversation

Don’t stop at open or view data. In chat, the strongest signals usually come from progression.

Look at metrics such as:

- Reply rate to the first message

- Button click rate on key options

- Lead capture completion

- Qualified lead tags applied

- Product page visits from chat

- Purchase intent actions, such as starting checkout or booking a call

A conversational flow can feel highly engaging while still producing weak business outcomes. That’s why you should tie every test to a clear action that moves someone closer to a sale or qualification.
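To compare progression rather than surface engagement, you can turn each version's step counts into rates measured against everyone who entered the flow. The sketch below assumes you can export how many people reached each step; the step names and counts are invented for illustration.

```python
def funnel_rates(counts: dict) -> dict:
    """counts: step name -> people who reached it, listed in funnel order.

    Returns each later step as a share of people who reached the first step.
    """
    steps = list(counts.items())
    entered = steps[0][1]
    return {
        name: (reached / entered if entered else 0.0)
        for name, reached in steps[1:]
    }


if __name__ == "__main__":
    # Hypothetical counts for two versions of a chat opener.
    version_a = {"opened": 400, "replied": 120, "lead_captured": 40}
    version_b = {"opened": 400, "replied": 160, "lead_captured": 36}
    print("A:", funnel_rates(version_a))
    print("B:", funnel_rates(version_b))
```

In this made-up example, Version B earns more replies but captures fewer leads, which is exactly the kind of result a reply-rate-only view would miss.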

In chat-based marketing, the winning message isn’t the one that sounds smartest. It’s the one that gets the right person to take the next step.

Your Journey from Guesswork to Guaranteed Growth

A/B testing is bigger than a tactic. It’s a change in how you make marketing decisions.

Instead of asking your team to predict what customers will prefer, you give customers two choices and study what they do. That single shift improves more than conversion rates. It improves clarity. You stop making random edits, stop debating opinions endlessly, and start learning what moves buyers forward.

The good news is that you don’t need a huge team or a technical background to start. You need one meaningful metric, one controlled change, and the patience to let the data settle before you act.

If you’re a business owner, keep the first test small. Try one landing page headline. One email subject line. One chatbot opener. One follow-up message. If the result is positive, scale it. If the result is flat, test the next idea. If the result is negative, you still learned what not to roll out.

That’s how sustainable growth happens. Not through one perfect campaign, but through repeated decisions that get a little smarter over time.

For brands using conversational marketing, the upside is even bigger. Chat flows, DMs, and automated follow-ups create dozens of moments where a small improvement can increase replies, qualify better leads, and recover more lost sales. Testing turns those moments into measurable opportunities.

Start simple. Stay consistent. Let the audience decide.

If you’re ready to apply A/B testing inside chat, Clepher gives you a no-code way to build conversational flows, split traffic between variants, measure replies and clicks, and improve lead generation across your website, Messenger, WhatsApp, and Instagram DMs.